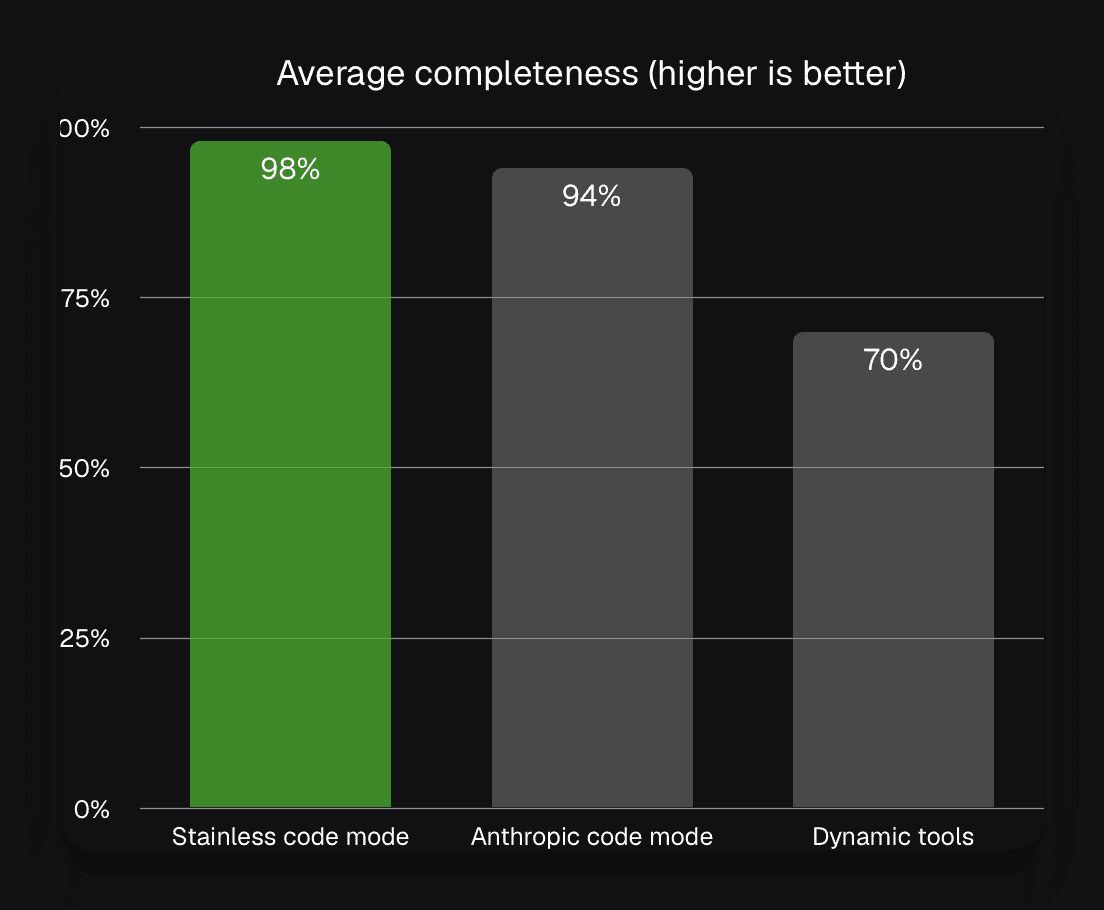

94–97% task accuracy

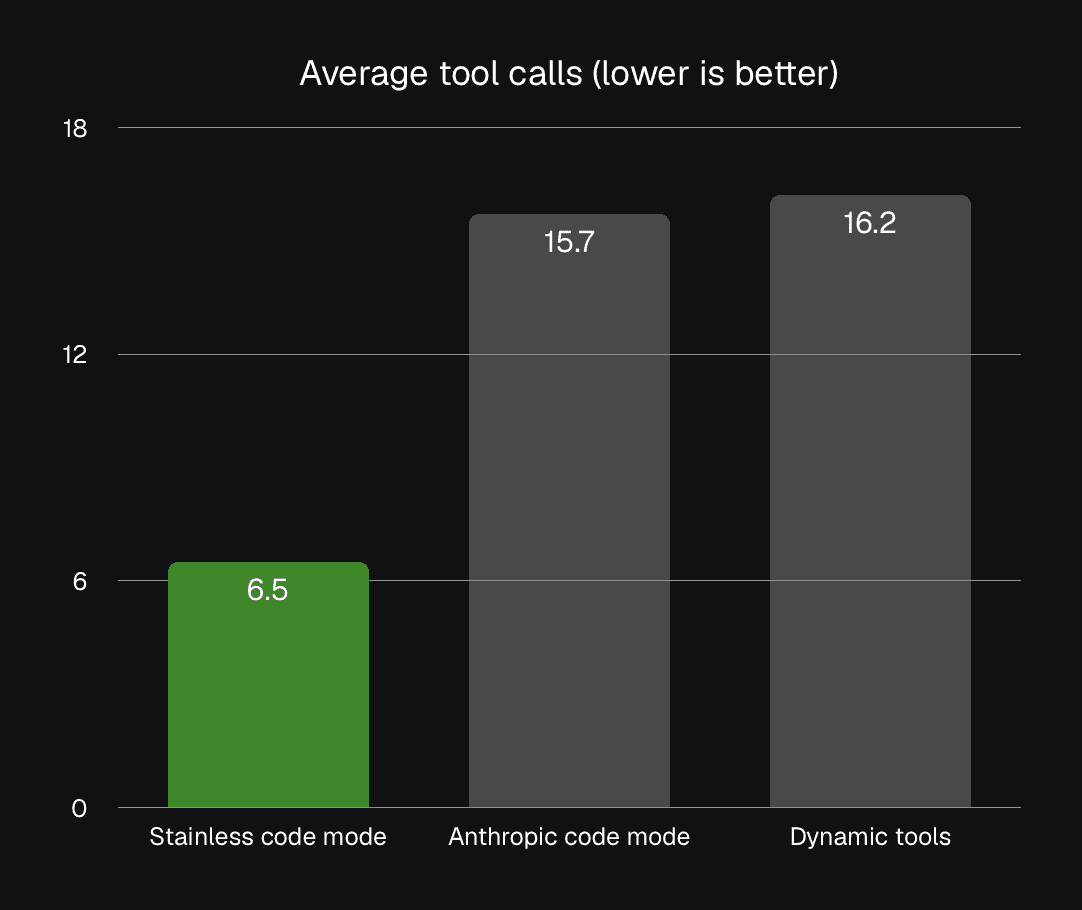

3x fewer tool calls

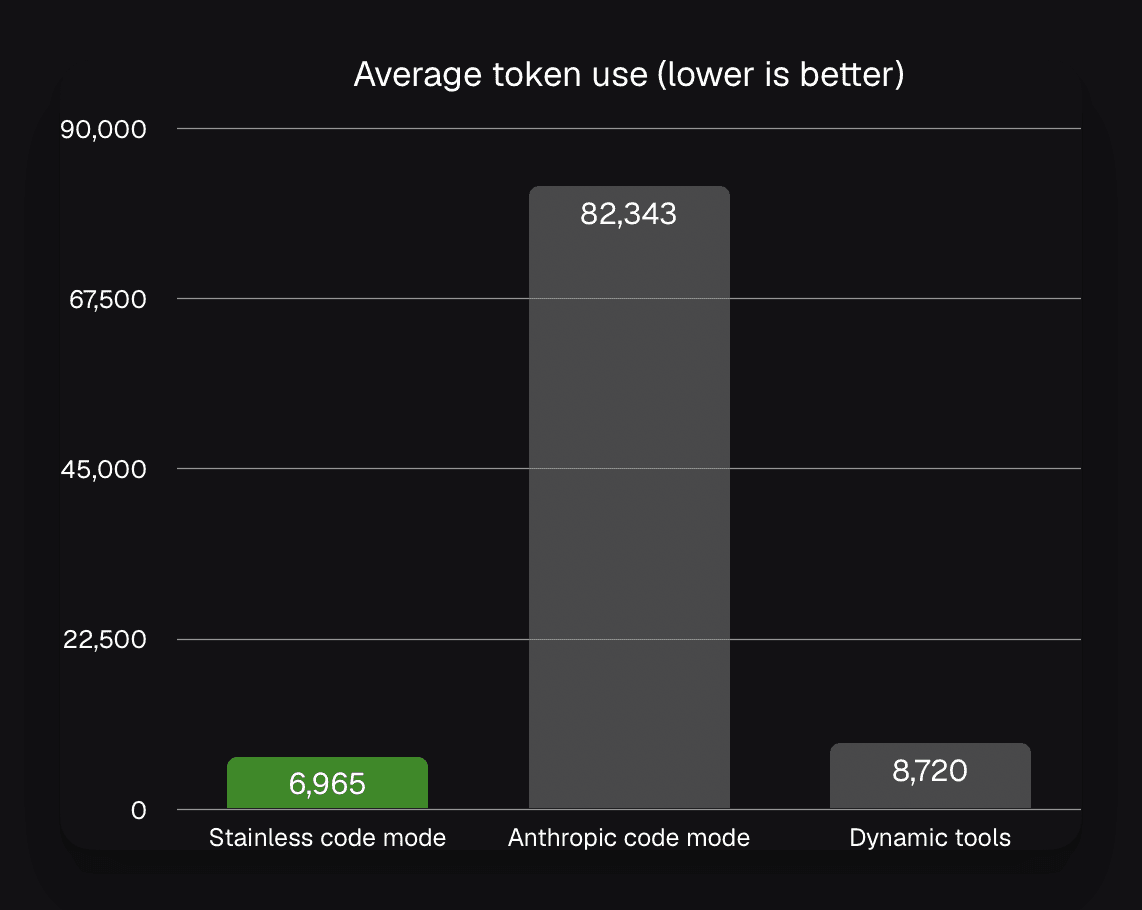

100k+ tokens saved on complex tasks

Higher accuracy. Fewer tokens. Faster results.

Stainless Code Mode delivers higher task accuracy, uses a fraction of the tokens by avoiding unnecessary data dumps, and sharply reduces the "thinking time" that complex tasks require.

Accuracy

Agents write code using the idiomatic Stainless-generated TypeScript SDKs. They get built-in auto-pagination, typed errors, and intuitive parameters: less trial-and-error and faster task completion.

Token use

We don't just give agents code tools; we give them the manual. The search_docs tool gives agents access to a comprehensive, up-to-date API reference tailored specifically to your SDK.

Duration

Code Mode reduces the time agents need for complex API tasks. Because models generate and execute SDK code with type hints and error feedback, agents complete workflows in fewer turns.

How it works

Connect and Initialize

The client connects to the MCP server, which advertises exactly two tools, search_docs and execute, along with any brief startup instructions (auth, SDK setup, common patterns).
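As a rough sketch of what the client might see after connecting, the snippet below models the two advertised tools using the general shape of an MCP tool listing (name, description, JSON Schema input). Only the tool names come from this page; the descriptions and the `query` and `code` parameter names are assumptions for illustration.

```typescript
// General shape of one tool entry in an MCP tool listing.
interface ToolDescriptor {
  name: string;
  description: string;
  inputSchema: {
    type: "object";
    properties: Record<string, { type: string }>;
    required: string[];
  };
}

// Tool names are from the text above; descriptions and parameter
// names ("query", "code") are hypothetical.
const advertisedTools: ToolDescriptor[] = [
  {
    name: "search_docs",
    description: "Search the SDK reference for methods and examples.",
    inputSchema: {
      type: "object",
      properties: { query: { type: "string" } },
      required: ["query"],
    },
  },
  {
    name: "execute",
    description: "Typecheck and run TypeScript that uses the SDK.",
    inputSchema: {
      type: "object",
      properties: { code: { type: "string" } },
      required: ["code"],
    },
  },
];

const toolNames = advertisedTools.map((tool) => tool.name);
console.log(toolNames.join(", "));
```

The point of the sketch is the size of the list: the agent's context holds two small schemas regardless of how many endpoints the API has.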

Understand the user’s goal

The agent reads the user request and decides what information it needs (entities, resources, filters, desired output) before touching any tools.

Search the docs

The agent calls search_docs with a targeted query to retrieve the most relevant SDK methods, parameter shapes, and minimal examples.
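For illustration, a search_docs call could be framed as a standard MCP `tools/call` request like the one built below. The `query` argument name and the example query text are assumptions, not the documented parameters of the tool.

```typescript
// JSON-RPC envelope used by MCP for invoking a tool.
interface ToolCallRequest {
  jsonrpc: "2.0";
  id: number;
  method: "tools/call";
  params: { name: string; arguments: Record<string, unknown> };
}

// Builds a request for the search_docs tool.
// The "query" argument name is hypothetical.
function buildSearchDocsCall(id: number, query: string): ToolCallRequest {
  return {
    jsonrpc: "2.0",
    id,
    method: "tools/call",
    params: { name: "search_docs", arguments: { query } },
  };
}

const request = buildSearchDocsCall(1, "list invoices filtered by status");
console.log(JSON.stringify(request, null, 2));
```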

Draft typed SDK code

Using the doc results, the agent writes a small TypeScript program that uses the generated SDK to perform the task, including pagination/looping, filtering, and aggregation as needed.
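A minimal sketch of the kind of program an agent might draft is shown below. It uses an in-memory stand-in instead of a real generated SDK, so `Invoice`, `listInvoices`, and the sample data are all hypothetical; the pattern it illustrates is looping over an auto-paginated list, filtering, and aggregating into a small summary.

```typescript
// Hypothetical record type; a real generated SDK would export its own types.
interface Invoice {
  id: string;
  status: "open" | "paid";
  amountCents: number;
}

// In-memory stand-in for the data behind the API.
const INVOICES: Invoice[] = [
  { id: "inv_1", status: "open", amountCents: 1200 },
  { id: "inv_2", status: "paid", amountCents: 500 },
  { id: "inv_3", status: "open", amountCents: 800 },
  { id: "inv_4", status: "open", amountCents: 300 },
  { id: "inv_5", status: "paid", amountCents: 100 },
];

// Stand-in for an auto-paginating list method: it reads one page at a
// time and yields items, so the caller never handles page cursors.
async function* listInvoices(pageSize: number): AsyncGenerator<Invoice> {
  for (let start = 0; start < INVOICES.length; start += pageSize) {
    const page = INVOICES.slice(start, start + pageSize);
    yield* page;
  }
}

// Filter to open invoices and aggregate into a compact summary.
async function summarizeOpenInvoices(): Promise<{
  count: number;
  totalCents: number;
  ids: string[];
}> {
  const ids: string[] = [];
  let totalCents = 0;
  for await (const invoice of listInvoices(2)) {
    if (invoice.status !== "open") continue;
    ids.push(invoice.id);
    totalCents += invoice.amountCents;
  }
  return { count: ids.length, totalCents, ids };
}

summarizeOpenInvoices().then((summary) => console.log(summary));
```

Because the aggregation happens inside the program, only the summary object needs to travel back to the model, not every invoice.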

Typecheck, execute, and iterate

The agent sends the code to the execute tool; the server typechecks it first, runs it in a sandbox, and returns either results or actionable errors. If there is an error, the agent fixes the code and retries.
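The fix-and-retry loop can be sketched as follows. Both functions here are stubs: `execute` stands in for the server-side tool and `revise` stands in for the model correcting its own code, and the error message format is invented for the example.

```typescript
type ExecuteResult =
  | { ok: true; output: string }
  | { ok: false; error: string };

// Stub for the execute tool: rejects code that calls a method that
// does not exist, the way a typecheck step might.
function execute(code: string): ExecuteResult {
  if (code.includes("listAll")) {
    return {
      ok: false,
      error: "Property 'listAll' does not exist; did you mean 'list'?",
    };
  }
  return { ok: true, output: "3 open invoices" };
}

// Stub for the model revising its code based on the error text.
function revise(code: string, error: string): string {
  const match = error.match(/'(\w+)' does not exist; did you mean '(\w+)'/);
  if (!match) return code;
  const [, wrong, suggested] = match;
  if (!wrong || !suggested) return code;
  return code.replace(wrong, suggested);
}

// Run, and on failure revise and retry up to maxAttempts times.
function runWithRetries(
  initialCode: string,
  maxAttempts = 3,
): { attempts: number; result: ExecuteResult } {
  let code = initialCode;
  let result = execute(code);
  let attempts = 1;
  while (!result.ok && attempts < maxAttempts) {
    code = revise(code, result.error);
    result = execute(code);
    attempts++;
  }
  return { attempts, result };
}

const outcome = runWithRetries("client.invoices.listAll()");
console.log(outcome.attempts, outcome.result);
```

Capping the number of attempts is a design choice in this sketch, not something this page specifies.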

Return the final answer

The agent summarizes the executed result into a user-friendly response, typically returning only the distilled outputs (counts, IDs, totals, links), not raw payloads.

FAQ

How does this differ from other MCP implementations?

Most MCP servers expose one tool per endpoint or use dynamic discovery. Code Mode exposes just two tools: execute and search_docs. Agents write code that calls your SDK directly. This prevents context overflow and avoids the performance penalty of multi-step discovery.

Is code execution secure?

Yes. Code runs in isolated Cloudflare Workers per request. TypeScript analysis happens before execution. No code persists between requests.

What happens when my API changes?

Stainless provides automated GitHub workflows. When your OpenAPI spec changes, your MCP server regenerates automatically with deterministic, merge-conflict-free updates.

How do I deploy the MCP server?

There are several options: publish it as an npm package for local use, deploy it to Cloudflare Workers with one click, or self-host it in a Docker container.

Does this work with my existing Stainless SDKs?

Yes. If you have Stainless-generated SDKs, add the MCP target to your config. Your SDK becomes immediately accessible to agents via Code Mode.

How does this handle large APIs with hundreds of endpoints?

The agent discovers only the methods it needs via doc search, rather than loading hundreds of schemas into context upfront. This keeps token usage and decision complexity roughly constant, even as the API surface grows. In practice, performance scales with the size of the task, not the number of endpoints in the API.

Create a Code Mode MCP server for your API

Generate MCP servers where agents use your SDK directly. No tool explosion, no context bloat.